[ by Charles Cameron — games, games, games — & prepping a challenge for AI, the analytic community & CNA ]


Playing go, Hasegawa, Settei, 1819-1882, Library of Congress

**

In the past, computers have won such games as Pong and Space Invaders:

Google’s AI system, known as AlphaGo, was developed at DeepMind, the AI research house that Google acquired for $400 million in early 2014. DeepMind specializes in both deep learning and reinforcement learning, technologies that allow machines to learn largely on their own. Previously, founder Demis Hassabis and his team had used these techniques in building systems that could play classic Atari video games like Pong, Breakout, and Space Invaders. In some cases, these systems not only outperformed professional game players. They rendered the games ridiculous by playing them in ways no human ever would or could. Apparently, this is what prompted Google’s Larry Page to buy the company.

Wired, Google’s Go Victory Is Just a Glimpse of How Powerful AI Will Be

I can’t corral all the games they’ve played into a single, simple timeline here, because the most interesting discussion I’ve seen is this clip, which moves rapidly from Backgammon via Draughts and Chess to these last few days’ Go matches:

Jeopardy should definitely be included somewhere in there, though:

Facing certain defeat at the hands of a room-size I.B.M. computer on Wednesday evening, Ken Jennings, famous for winning 74 games in a row on the TV quiz show, acknowledged the obvious. “I, for one, welcome our new computer overlords,” he wrote on his video screen, borrowing a line from a “Simpsons” episode.

What’s up next? It seems that suggestions included Texas Hold’em Poker and the SAT:

Artificial intelligence experts believe computers are now ready to take on more than board games. Some are putting AI through the wringer with two-player no-limit Texas Hold’em poker to see how a computer fares when it plays against an opponent whose cards it can’t see. Others, like Oren Etzioni at the Allen Institute for Artificial Intelligence, are putting AI through standardized testing like the SATs to see if the computers can understand and answer less predictable questions.

LA Times, AlphaGo beats human Go champ for the third straight time, wins best-of-5 contest

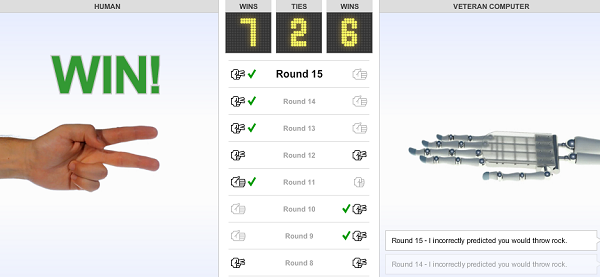

And of course, there’s Rock, Paper, Scissors, which you can still play on the New York Times website:

**

Now therefore:

In a follow-up post I want to present what in my view is a much tougher game-challenge to AI than any of the above, namely Hermann Hesse’s Glass Bead Game, which is a major though not entirely defined feature of his Nobel-winning novel, Das Glasperlenspiel, also known in English as The Glass Bead Game or Magister Ludi.

I believe a game such as my own HipBone variant on Hesse’s would not only make a fine challenge for AI, but would also be of use in broadening the skillset of the analytic community, and would make a suitable response to the question recently raised on PaxSIMS: Which games would you suggest to the US Navy?

As I say, though, this needs to be written up in detail as it applies to each of those three projects — work is in progress, see you soon.

**

Edited to add:

And FWIW, this took my breath away. From The Sadness and Beauty of Watching Google’s AI Play Go:

At first, Fan Hui thought the move was rather odd. But then he saw its beauty.

“It’s not a human move. I’ve never seen a human play this move,” he says. “So beautiful.” It’s a word he keeps repeating. Beautiful. Beautiful. Beautiful.

The move in question was the 37th in the second game of the historic Go match between Lee Sedol, one of the world’s top players, and AlphaGo, an artificially intelligent computing system built by researchers at Google.

Now that’s remarkable, that gives me pause.